How AI-Assisted Narrative Threading Supports Fragmented Memorials

The Hallucination Problem in Memorial AI

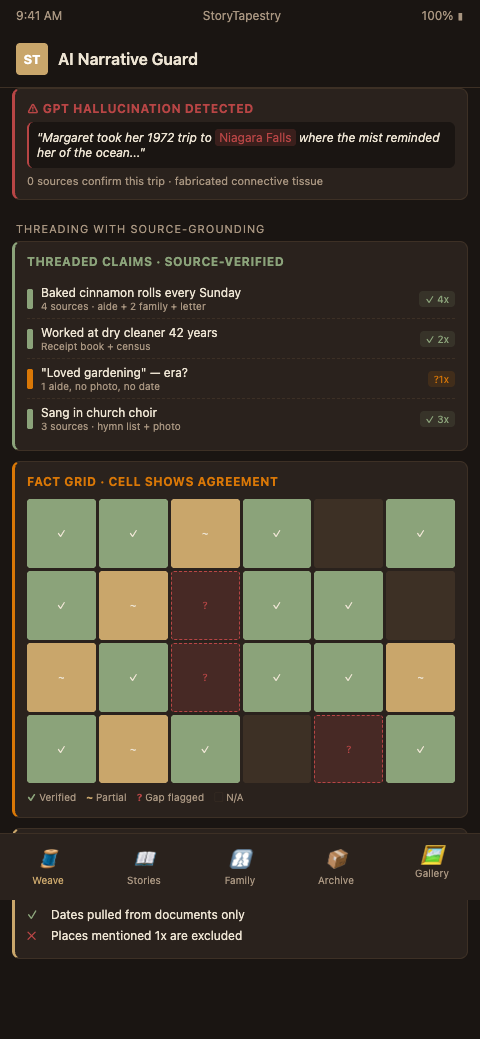

Funeral homes have started adopting ChatGPT and similar AI tools, and NLP-powered writing assistants have moved rapidly into memorial composition and obituary assembly workflows. The Washington Post documented directors using AI to draft obituaries from minimal family input, and tools like Empathy's Finding Words, built on GPT-3.5, produce usable first drafts. The outputs are often fluent, but they are also known to hallucinate details into death announcements: phantom careers, invented travel, fictional grandchildren.

For memorial services of dementia patients, this failure mode is worse. The family cannot always tell what is true. Sarah, reviewing her mother Margaret's AI-generated obituary, has no way to verify whether Margaret actually "taught piano to neighborhood children in 1978." The patient cannot correct the record. Caregivers may not remember that far back. Generic AI tools solve the wrong problem: they make text smoother rather than assembling truthful fragments from sources.

The ethical weight is real. A review of the ethics of digital doppelgangers documents the implications of AI clones trained on emails and other personal data, and memorial AI for dementia families sits in an adjacent risk zone. Memorial tooling cannot be a plausibility engine. It must be a threading engine: something that connects verified fragments without generating new ones.

The stakes rise when the decedent cannot validate the output. A living person reviewing an AI-generated bio can reject fabricated details. A decedent with advanced dementia could not have corrected the record in life, and after death the burden falls entirely on the family, who may not know what is true and what is hallucinated. A memorial containing plausible fiction becomes part of the family's inheritance. Children and grandchildren retell its stories to their own children, and the fabrication calcifies across generations. This is not a hypothetical risk; it is the failure mode of generic AI tools that do not enforce source attribution at the sentence level.

Narrative Threading That Honors the Source Material

StoryTapestry's AI-assisted narrative threading is architected around a single rule: every sentence in the final memorial must be anchored to specific source fragments. The model threads; it does not invent. When 14 caregiver accounts contain overlapping details about a decedent's woodworking shop, the AI identifies the shared narrative core, sequences it chronologically, and produces connective phrasing that cites its sources. When fragments contradict, the AI surfaces the contradiction rather than smoothing it over.

Research suggests that story coherence in large language models is improving. GPT-3.5 and GPT-4 match humans on coherence ratings across 4,020 stories, and newer frameworks like SCORE achieve 23.6% higher coherence than baseline GPT. StoryTapestry uses these as foundation models but layers a source-attribution constraint on every generation call. If the model cannot cite at least two source fragments supporting a sentence, that sentence does not appear in the output.
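The two-citation rule can be sketched as a filter that runs after generation. This is a minimal illustration, not StoryTapestry's actual implementation: the `Candidate` type, the `enforce_attribution` function, and the fragment ID format are all hypothetical names chosen for the example. It assumes the model returns each sentence together with the fragment IDs it claims as support.

```python
from dataclasses import dataclass

MIN_CITATIONS = 2  # threshold taken from the rule described above


@dataclass(frozen=True)
class Candidate:
    """A proposed sentence plus the fragment IDs the model cites for it (hypothetical type)."""
    text: str
    fragment_ids: tuple


def enforce_attribution(candidates, known_fragment_ids, min_citations=MIN_CITATIONS):
    """Split candidates into (kept, dropped).

    A citation only counts if it points at a fragment that exists in the
    corpus, and duplicate citations of the same fragment count once.
    """
    kept, dropped = [], []
    for candidate in candidates:
        valid = {fid for fid in candidate.fragment_ids if fid in known_fragment_ids}
        if len(valid) >= min_citations:
            kept.append(candidate)
        else:
            dropped.append(candidate)
    return kept, dropped
```

Counting only distinct, known IDs matters here: a sentence that cites the same fragment twice, or cites an ID that is not in the corpus, is treated as unsupported.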

The tapestry metaphor becomes computationally literal here. Fragments are threads of different colors, weights, and textures. The AI's job is to find where threads can be woven together because they converge on a shared event, a shared quality, or a shared period. A memory-care aide's observation that the decedent "hummed the same song every morning" threads with a grandchild's memory of "Grandpa's whistling lullaby" and with a 1982 cassette tape fragment, and the AI proposes the woven sentence with all three citations attached.

Gap detection is the second critical function. Automated narrative analysis using BERT achieves human-level accuracy on narrative macrostructure, which StoryTapestry adapts to identify where a life story has thin coverage. The system might flag: "career domain 1985-1993 has zero fragments; primary source for this period (former coworker) was not interviewed; recommend outreach to [Name] via LinkedIn." Gaps become action items, not narrative voids to be papered over.
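The BERT-based macrostructure analysis is beyond a short example, but the final step of gap detection, finding stretches of years with no fragments at all, can be sketched directly. The function below is a simplified stand-in under the assumption that each fragment has already been tagged with a year; `find_gaps` and `min_gap_years` are hypothetical names.

```python
def find_gaps(fragment_years, start_year, end_year, min_gap_years=3):
    """Return inclusive (first_year, last_year) ranges that contain no fragments.

    Runs of empty years shorter than min_gap_years are ignored.
    """
    covered = set(fragment_years)
    gaps = []
    run_start = None
    # Iterate one year past end_year so a gap that reaches the end is flushed.
    for year in range(start_year, end_year + 2):
        empty = year <= end_year and year not in covered
        if empty and run_start is None:
            run_start = year
        elif not empty and run_start is not None:
            if year - run_start >= min_gap_years:
                gaps.append((run_start, year - 1))
            run_start = None
    return gaps


if __name__ == "__main__":
    # Fragments dated 1980, 1984, 1994, and 1995 leave 1985-1993 uncovered.
    print(find_gaps([1980, 1984, 1994, 1995], 1980, 1996))
```

Each returned range can then be turned into an outreach action item rather than filled with generated prose.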

Sensory fragment threading integrates tightly with this approach. Sensory fragments (smells, textures, sounds) anchor stories in dementia memory work because they persist longer than episodic narrative. The AI threads sensory fragments across accounts: when six different caregivers mention the decedent's rose garden, the garden becomes a structural anchor for the 1990s chapter of the tapestry. This anchoring reduces hallucination risk because the model has concrete shared references to thread around.

Human-in-the-loop review is not optional. StoryTapestry routes every woven segment through family approval before finalization. The family sees the proposed sentence alongside the source fragments and can accept, edit, or reject. This is slower than generative obituary tools but it is the only structure that produces a memorial the family trusts in 5, 10, or 50 years. The fragmented source case study demonstrates this workflow applied to 12 sources and a 40-year life story.

Attribution-anchored threading works beyond dementia contexts. Related techniques apply to veteran memorials, where 22 comrade accounts across 30 years of service require the same kind of threading. The underlying architecture (fragment ingestion, attribution constraint, human review) transfers across memorial contexts.

Contradiction detection is a first-class capability. When one caregiver says the decedent was a vegetarian and another says he ate turkey every Thanksgiving, StoryTapestry does not silently pick one and hide the other. The system flags the contradiction, presents both fragments with their source attributions, and routes the decision to the family. The family may resolve it ("he was a vegetarian after his 2003 heart attack") or may preserve the contradiction as meaningful ("he was different with different people"). Both outcomes are valid; the structural point is that the AI surfaces the tension rather than resolving it invisibly. Functional memorial AI must be opinionated about this — hiding contradictions to produce smoother prose is a failure mode, not a feature.
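A rough sketch of the flagging step follows. It assumes an earlier stage has already reduced each fragment to structured claims of the form (fragment ID, attribute, value); that extraction step, the `find_contradictions` name, and the attribute labels are assumptions made for illustration. Real fragments would need semantic comparison rather than exact string matching.

```python
from collections import defaultdict


def find_contradictions(claims):
    """Group claims by attribute and return attributes with more than one distinct value.

    claims: iterable of (fragment_id, attribute, value) tuples.
    Returns {attribute: {value: [fragment_ids]}} for conflicting attributes only,
    so every competing version keeps its source attribution.
    """
    by_attribute = defaultdict(lambda: defaultdict(list))
    for fragment_id, attribute, value in claims:
        by_attribute[attribute][value].append(fragment_id)
    return {
        attribute: dict(values)
        for attribute, values in by_attribute.items()
        if len(values) > 1
    }
```

Nothing in this step chooses a winner. The output is what gets routed to the family, who can resolve the conflict or keep both versions.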

Advanced Threading Architecture Decisions

Constrain the model to operate only on the fragment corpus. Do not let the foundation model draw on general world knowledge during memorial generation. If the only facts the model may use are the 127 caregiver, family, and artifact fragments, a 1972 Niagara Falls trip has nothing to anchor to, because no source contains one. This requires retrieval-augmented generation configured with strict source boundaries, not the default "use any training data" mode that generic obituary tools rely on.
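One way to picture strict source boundaries is a retrieval step that only ever sees the fragment corpus and declines to build a prompt when nothing matches. The sketch below uses plain word overlap in place of a real embedding search, and `retrieve`, `build_prompt`, and the prompt wording are hypothetical. A prompt restriction on its own does not remove what a model learned in training, so a sketch like this would sit alongside a post-generation attribution check rather than replace it.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def retrieve(query, corpus, top_k=3):
    """Rank fragments by word overlap with the query.

    corpus: dict mapping fragment_id -> fragment text.
    Returns up to top_k (fragment_id, text) pairs; fragments with no overlap are excluded.
    """
    query_tokens = tokenize(query)
    scored = []
    for fragment_id, text in corpus.items():
        score = len(query_tokens & tokenize(text))
        if score > 0:
            scored.append((score, fragment_id))
    ranked = sorted(scored, key=lambda pair: (-pair[0], pair[1]))
    return [(fragment_id, corpus[fragment_id]) for _, fragment_id in ranked[:top_k]]


def build_prompt(query, corpus, top_k=3):
    """Build a source-bounded prompt, or return None when the corpus has nothing relevant."""
    hits = retrieve(query, corpus, top_k)
    if not hits:
        return None  # treat as a coverage gap instead of asking the model to generate
    sources = "\n".join(f"[{fragment_id}] {text}" for fragment_id, text in hits)
    return (
        "Use ONLY the sources below. Cite a source ID for every sentence.\n"
        f"{sources}\n\nTopic: {query}"
    )
```

Returning `None` for an unmatched topic is the design choice that matters: the absence of a source becomes a gap to report, not a blank for the model to fill.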

Surface disagreement explicitly in the UI. When two sources conflict, the AI should present both candidate sentences to the family with source citations, rather than picking one. This treats the family as the arbiter, which matches the lived reality of memory-care bereavement: families frequently hold information that none of the professional caregivers have, and the family's reconciliation is the truth-producing step. Hiding disagreement denies the family this role.

Calibrate confidence scoring on sparse fragments. Late-stage dementia memorials often have narrow fragment sets for entire life decades. The AI should report its confidence per life-period, so the final tapestry shows dense chapters (high-confidence, many fragments) and sparse chapters (low-confidence, few fragments) transparently. Families who see the map of coverage can decide where to invest additional interview effort rather than receiving uniformly smoothed prose that hides thinness.
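A per-period coverage map can be approximated by counting fragments and distinct sources per decade. The thresholds, the "dense" and "sparse" labels, and the `coverage_by_decade` name below are illustrative assumptions, not calibrated values; a production score would presumably weigh source reliability as well.

```python
from collections import defaultdict


def coverage_by_decade(fragments, dense_threshold=5, min_sources=2):
    """Summarize fragment coverage per decade.

    fragments: iterable of (source_id, year) pairs.
    A decade is labeled "dense" only if it has at least dense_threshold
    fragments from at least min_sources distinct sources.
    """
    counts = defaultdict(int)
    sources = defaultdict(set)
    for source_id, year in fragments:
        decade = (year // 10) * 10
        counts[decade] += 1
        sources[decade].add(source_id)

    report = {}
    for decade in sorted(counts):
        n_fragments = counts[decade]
        n_sources = len(sources[decade])
        is_dense = n_fragments >= dense_threshold and n_sources >= min_sources
        report[decade] = {
            "fragments": n_fragments,
            "sources": n_sources,
            "coverage": "dense" if is_dense else "sparse",
        }
    return report
```

Decades with no fragments at all do not appear in this report; those are better handled by gap detection than by a confidence label.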

Build reviewer-trained fine-tuning into the pipeline. Each time a family rejects or edits an AI proposal, the edit becomes a training signal for the threading model's behavior on that decedent's tapestry. Over the course of a six-week reconstruction, the model learns the family's narrative preferences, regional phrasing, and relationship dynamics. This is deeply context-specific and should not leak across customers: per-decedent fine-tuning is the architectural separation that prevents cross-contamination.

Log every hallucination candidate the model generated but suppressed. The attribution constraint will block many candidate sentences that the underlying LLM wanted to produce. Logging these — what sentence was attempted, why it was blocked — creates an audit trail that is useful for both family trust (proof the system refuses invention) and regulatory defense if AI-generated memorial content ever falls under consumer-protection scrutiny.
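A suppression log of this kind could be as simple as one JSON record per blocked sentence, appended to a file. The record fields and the `log_suppressed` function are hypothetical; the sketch only shows the shape of an append-only audit entry.

```python
import io
import json
from datetime import datetime, timezone


def log_suppressed(stream, sentence, cited_fragment_ids, reason):
    """Append one JSON line describing a blocked candidate sentence.

    stream: any writable text stream (an open file, or io.StringIO in tests).
    Returns the record that was written.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "sentence": sentence,
        "cited_fragment_ids": list(cited_fragment_ids),
        "reason": reason,
    }
    stream.write(json.dumps(record) + "\n")
    return record


if __name__ == "__main__":
    buffer = io.StringIO()  # stands in for an append-mode log file
    log_suppressed(
        buffer,
        "He visited Niagara Falls in 1972.",
        [],
        "no supporting fragments",
    )
    print(buffer.getvalue())
```

One record per line keeps the log easy to append to and easy to hand over in full if a family or regulator asks what the system declined to write.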

Integrate caregiver-facing simplification. When collecting fragments from late-stage caregivers who are themselves exhausted or emotional, the AI can simplify prompts in real time. "Tell me about a morning you remember with Margaret" beats "Describe a specific episodic memory from the patient's daily routine." The threading model can rephrase structured prompts into caregiver-accessible language without losing the fragment-collection discipline.

Build a versioned audit history. Every thread in the tapestry should have a change history — which fragments were added when, which were edited, which were removed, and why. Families returning to the tapestry months or years after the memorial may want to revisit decisions or add new sources that emerged later. The audit history makes this extensible rather than destructive; new information threads alongside old rather than overwriting it. This also protects against accusations of memorial manipulation — if a family member later claims "this wasn't what we agreed to," the versioned history resolves the dispute with evidence. The same architectural discipline underpins AI comrade matching in veteran memorial work, where attribution-anchored threading across decades of comrade accounts demands exactly the same versioning backbone.
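The non-destructive history described above maps naturally onto an append-only list of revisions that can be replayed to any point in time. The `Revision` and `ThreadHistory` types below are hypothetical and deliberately minimal: they track which fragments belong to a thread, not the text of the woven sentence.

```python
from dataclasses import dataclass, field

ALLOWED_ACTIONS = {"add", "edit", "remove"}


@dataclass(frozen=True)
class Revision:
    """One immutable entry in a thread's change history."""
    version: int
    action: str
    fragment_id: str
    reason: str


@dataclass
class ThreadHistory:
    """Append-only history for a single thread; nothing is ever overwritten."""
    revisions: list = field(default_factory=list)

    def record(self, action, fragment_id, reason):
        """Append a revision and return it. Versions start at 1."""
        if action not in ALLOWED_ACTIONS:
            raise ValueError(f"unknown action: {action}")
        revision = Revision(len(self.revisions) + 1, action, fragment_id, reason)
        self.revisions.append(revision)
        return revision

    def active_fragments(self, at_version=None):
        """Replay the history and return the fragment IDs in the thread.

        If at_version is given, replay stops after that version, which shows
        what the thread contained at an earlier point.
        """
        active = []
        for revision in self.revisions:
            if at_version is not None and revision.version > at_version:
                break
            if revision.action == "add" and revision.fragment_id not in active:
                active.append(revision.fragment_id)
            elif revision.action == "remove" and revision.fragment_id in active:
                active.remove(revision.fragment_id)
        return active
```

Because removals are recorded as new revisions rather than deletions, calling `active_fragments(at_version=...)` can reconstruct exactly what the family approved at any earlier review.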

Start Threading Smarter

Generic LLM obituary tools will give you fluent text and occasional embarrassing hallucinations. StoryTapestry gives you a defensible threading workflow that families trust and that you can explain to regulators, memory-care partners, and grieving families. We can run a threading demo on a sample fragment set in 20 minutes — bring three to five caregiver accounts and we will show you the output alongside the attribution trail. You will see immediately why this is different from the obituary AI your competitors are using. The demo walks through source-attribution retention, confidence scoring on reconciled threads, contradictory-account handling with both versions preserved, and the audit history you can surface to a family member who later questions a memorial decision.

Firms that continue past the demo receive a 45-day pilot on two to three active cases, platform access for your two primary obituary writers, and a threading specialist who joins the first family review meeting. We include a comparison report that runs your current obituary process side-by-side with the StoryTapestry workflow on a matched historical case, so your team sees the fragment-retention delta before committing. Pilot onboarding typically begins within five business days of the demo, and most firms ship their first threaded memorial inside 30 days. Bring your lead writer, your aftercare coordinator, and any facility partner curious about attribution — the demo call produces a three-role next-step list you can act on that week.