Measuring Emotional Impact of Infant Loss Memorial Engagement

The Measurement Gap in Infant Loss Memorial Programs

A bereavement coordinator at a 600-bed academic medical center compiles an annual report for leadership. Her metrics include number of families served, keepsake boxes distributed, memorial events held, and a five-question parent satisfaction survey. The satisfaction response rate is 23%. The scores are uniformly positive. Leadership approves program continuation. The program has no data on whether families experience fewer complicated grief outcomes, whether sibling engagement correlates with parent wellbeing, or whether specific memorial interventions produce different outcomes than others.

A systematic review cataloging 31 unique grief measurement instruments used after traumatic loss shows the range of options available to hospital programs. The Inventory of Complicated Grief-Revised (ICG-R) is the most commonly used. The Perinatal Grief Intensity Scale (PGIS), validated with a Cronbach's alpha of 0.75 and a factor structure explaining 65% of the variance, offers perinatal specificity. A separate systematic review of instruments measuring grief after perinatal loss catalogs both the available tools and the factors associated with grief trajectories.
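Because internal consistency figures like the PGIS alpha of 0.75 come up repeatedly in program reporting, it can help to see how Cronbach's alpha is computed from item-level responses. The sketch below is illustrative only: `cronbach_alpha` is a hypothetical helper, not code from the PGIS validation study, and it assumes a complete respondents-by-items matrix with no missing answers.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items score matrix.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    if any(len(row) != k for row in responses):
        raise ValueError("every respondent must have a score for every item")
    # Transpose rows (respondents) into columns (items).
    item_columns = list(zip(*responses))
    item_variance_sum = sum(variance(col) for col in item_columns)
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")
    return k / (k - 1) * (1 - item_variance_sum / total_variance)
```

Items that move perfectly together, such as `cronbach_alpha([[1, 1, 1], [2, 2, 2], [3, 3, 3]])`, yield an alpha of approximately 1.0; items that vary independently of one another pull alpha toward zero or below.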

The measurement gap is not a lack of instruments. It is a lack of implementation infrastructure. Hospital bereavement programs rarely have the staff bandwidth to administer the PGIS at baseline, 6 months, and 12 months. They rarely have the analytic capacity to correlate memorial engagement data with grief outcomes. They rarely have the technology to unify engagement analytics with clinical measures in a single reporting pipeline. Without this infrastructure, claims of emotional impact remain rhetorical.

The StoryTapestry Framework for Measuring Memorial Emotional Impact

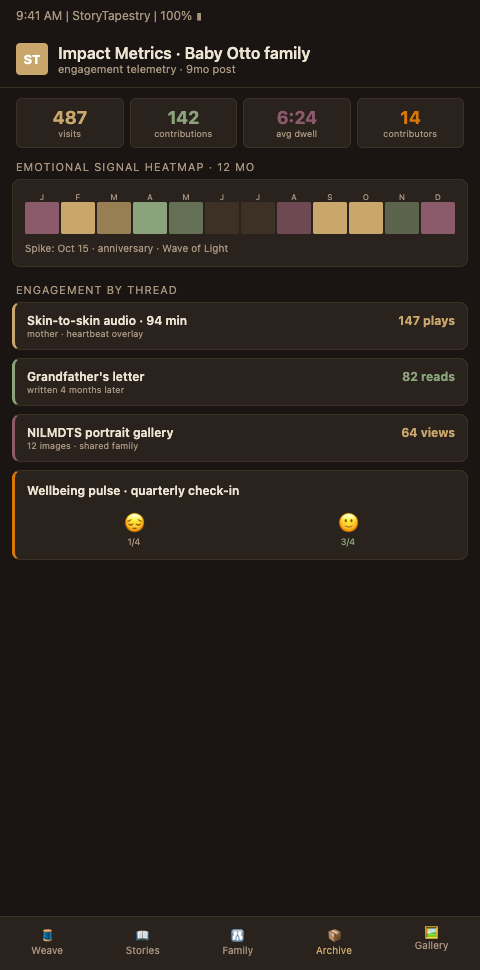

StoryTapestry measures emotional impact the way a master weaver assesses a completed tapestry: through multiple dimensions examined together, not through a single thread pulled for inspection. The framework combines three measurement layers that produce defensible evidence of program effectiveness.

Layer one is validated grief instrument administration, built on the same data architecture that supports scaling hospital programs across networks. Every family entering the StoryTapestry program completes the Perinatal Grief Intensity Scale at baseline (within 2 weeks of loss), 6 months, and 12 months. The scale's validation results (Cronbach's alpha of 0.75, 65% of variance explained) establish its psychometric rigor. Administration happens inside the platform through brief check-ins requiring 8 to 12 minutes of parent time, replacing lengthy paper surveys that are rarely returned. Because PGIS research shows the scale predicts intense grief at 12 to 18 months post-loss, early scores let programs flag families who may benefit from additional clinical support.
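The three-point administration schedule is simple date arithmetic, but month-end edge cases (a loss on August 31 has no February 31 follow-up) are easy to get wrong. This is a minimal sketch under stated assumptions: `pgis_schedule`, `add_months`, and `Checkin` are hypothetical names, the baseline date is taken as the end of the 2-week window, and shorter months clamp to their last day.

```python
import calendar
from dataclasses import dataclass
from datetime import date, timedelta


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping to the last day of shorter months."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


@dataclass
class Checkin:
    label: str
    due: date


def pgis_schedule(loss_date: date) -> list[Checkin]:
    """Hypothetical PGIS check-in schedule: baseline, 6 months, 12 months."""
    return [
        # Latest acceptable baseline date: the end of the 2-week window.
        Checkin("baseline", loss_date + timedelta(days=14)),
        Checkin("6_months", add_months(loss_date, 6)),
        Checkin("12_months", add_months(loss_date, 12)),
    ]
```

For a loss on 2024-08-31, this produces due dates of 2024-09-14, 2025-02-28, and 2025-08-31. A program would likely add a tolerance window around each follow-up date rather than treating it as a single day.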

Layer two is tapestry engagement analytics. The platform captures contributor count, entry count, modality distribution, prompt completion rates, and return visit cadence. These are not vanity metrics. The pattern of engagement predicts outcomes. Parents whose tapestries include 15 or more contributors show different 12-month grief trajectories than parents whose tapestries stall at 3 contributors. Parents who return to the tapestry monthly in the first six months demonstrate different integration patterns than parents who create a tapestry and then abandon it. When tapestry engagement analytics are plotted against PGIS scores, patterns emerge that guide clinical intervention.
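The five engagement measures listed above can be derived from a tapestry's entry log and the parent's visit history. The sketch below assumes hypothetical record shapes (`Entry`, `engagement_summary`) and approximates "monthly return in the first six months" as distinct 30-day buckets after the loss; a real platform may define cadence differently.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class Entry:
    contributor_id: str
    modality: str  # illustrative labels such as "photo", "text", "audio"


def engagement_summary(
    entries: list[Entry],
    prompts_offered: int,
    prompts_completed: int,
    loss_date: date,
    parent_visits: list[date],
) -> dict:
    """Summarize one tapestry's engagement (hypothetical metric definitions)."""
    modality_counts = Counter(e.modality for e in entries)
    # Approximate "months since loss" as 30-day buckets; keep buckets 0-5
    # (the first six months) and count how many saw at least one parent visit.
    early_months = {
        (v - loss_date).days // 30
        for v in parent_visits
        if 0 <= (v - loss_date).days < 180
    }
    return {
        "contributor_count": len({e.contributor_id for e in entries}),
        "entry_count": len(entries),
        "modality_share": {
            m: n / len(entries) for m, n in modality_counts.items()
        },
        "prompt_completion_rate": (
            prompts_completed / prompts_offered if prompts_offered else 0.0
        ),
        "months_visited_in_first_six": len(early_months),
    }
```

A value of 6 for `months_visited_in_first_six` corresponds to the "returns monthly" pattern; per-family summaries like this are what would be joined to PGIS scores for plotting.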

Layer three is clinical event tracking. Bereavement counselors log clinical events in the platform: crisis calls, therapy referrals, medication consultations, and support group attendance. This third layer allows correlation between memorial engagement, grief measures, and clinical utilization. Programs discover that parents with robust tapestry engagement make fewer emergency crisis calls while maintaining appropriate ongoing therapy relationships.
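Correlating engagement with clinical utilization starts with a join between the event log and per-family engagement data. This is a minimal sketch, assuming a hypothetical `ClinicalEvent` record and using the 15-contributor figure from the engagement discussion as an illustrative tier cutoff. It produces a descriptive comparison only; on its own it cannot show that engagement causes fewer crisis calls.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ClinicalEvent:
    family_id: str
    kind: str  # e.g. "crisis_call", "therapy_referral", "medication_consult"


def crisis_calls_by_tier(
    events: list[ClinicalEvent],
    contributor_counts: dict[str, int],
    threshold: int = 15,  # illustrative cutoff, not a validated boundary
) -> dict[str, float]:
    """Mean crisis calls per family, split into high/low contributor tiers."""
    calls: dict[str, int] = defaultdict(int)
    for event in events:
        if event.kind == "crisis_call":
            calls[event.family_id] += 1
    tiers: dict[str, list[int]] = defaultdict(list)
    for family_id, n_contributors in contributor_counts.items():
        tier = "high" if n_contributors >= threshold else "low"
        # Families with no logged crisis calls count as zero, not as missing.
        tiers[tier].append(calls[family_id])
    return {tier: sum(v) / len(v) for tier, v in tiers.items()}
```

Counting families with no crisis calls as zero matters: dropping them would inflate the mean for whichever tier has more quiet families.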

The tapestry metaphor matters for measurement because no single thread tells the whole story. A parent with high PGIS scores at 6 months may be weaving a tapestry with unusual depth that predicts integration by 12 months, or may be stalling at a difficult thread that needs clinical attention. Measurement without the weaving context misreads the pattern. The platform surfaces both dimensions together.

The Perinatal Grief Scale (PGS), a separate instrument that has been widely translated, supports cross-cultural measurement in diverse hospital populations. Research published in JOGNN showing that the PGIS reliably predicts intense grief in a subsequent pregnancy lets programs identify families who would benefit from subsequent-pregnancy support before they re-enter prenatal care. Both capacities extend the measurement framework beyond initial memorial creation.

The three-layer architecture has a practical consequence for program reporting. A bereavement coordinator preparing her annual report to leadership can produce a five-page document with three sections: clinical outcomes (PGIS trajectories and ICG-R scores), engagement patterns (tapestry depth and return visit cadence), and clinical utilization (crisis calls, therapy referrals, support group attendance). Each section is visual, quantified, and comparable across years. Leadership receiving this report responds differently than leadership receiving a satisfaction survey summary. Finance committees see the utilization reduction. Quality committees see the clinical improvement. Board members see the engagement patterns that indicate the program is reaching the families it intends to reach. This reporting structure is not window dressing; it is the evidence infrastructure that protects the program across budget cycles and leadership transitions.

Measurement also creates a feedback loop with program design. When a coordinator notices that parents whose tapestries contain photographs of physical keepsakes show different grief trajectories than parents whose tapestries contain only written entries, the observation can inform the program's keepsake photography protocol. When anniversary engagement spikes correlate with reduced crisis calls in the following month, the program can invest more in anniversary touchpoint infrastructure. Measurement without design feedback is reporting; measurement with design feedback is program improvement. Programs that build the feedback loop explicitly — quarterly reviews where the coordinator, a biostatistician, and a quality improvement specialist examine patterns and propose protocol changes — mature faster than programs that treat measurement as compliance.

Programs building measurement infrastructure benefit from adjacent patterns. Hospital program scaling establishes data standards that make multi-site measurement coherent. The contributor thread case study demonstrates how engagement analytics translate into documented family experience. Parallel work on memorial impact measurement in memory care contexts offers transferable measurement protocols that adapt to perinatal specificity.

Advanced Tactics for Rigorous Memorial Program Outcome Measurement

Programs committed to evidence-based impact measurement can adopt four advanced tactics that distinguish rigorous measurement from performative reporting.

Build a cohort comparison structure. When your network includes multiple hospitals, randomize the order in which sites adopt the memorial intervention, with appropriate ethical review. Sites that adopt StoryTapestry in quarter one can then be compared with sites that adopt in quarter three. Because every site eventually adopts, this staggered, randomized rollout (often called a stepped-wedge design) produces defensible comparative data without denying any family memorial care. Without a comparison structure, positive outcomes cannot be attributed to the intervention rather than to secular trends.
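The randomization step itself can be small and auditable. This sketch assumes a hypothetical `assign_rollout_waves` helper that shuffles site names and deals them into adoption waves in near-equal numbers; recording the seed lets an IRB or biostatistician reproduce the assignment exactly.

```python
import random
from typing import Optional


def assign_rollout_waves(
    sites: list[str],
    waves: tuple[str, ...] = ("Q1", "Q3"),
    seed: Optional[int] = None,
) -> dict[str, str]:
    """Randomly assign each site to an adoption wave, balancing wave sizes."""
    rng = random.Random(seed)  # dedicated generator so the seed is reproducible
    shuffled = list(sites)
    rng.shuffle(shuffled)
    # Deal shuffled sites round-robin so wave sizes differ by at most one.
    return {site: waves[i % len(waves)] for i, site in enumerate(shuffled)}
```

With six sites and two waves, each wave receives three sites. Real stepped-wedge designs often stratify by site size or annual loss volume before randomizing, which this sketch does not do.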

Separate process measures from outcome measures. Process measures include tapestry completion rates, keepsake distribution, and event attendance. Outcome measures include PGIS scores, ICG-Revised scores, return-to-work timelines, and relationship stability indicators. Too many programs conflate the two. High process numbers paired with unmeasured outcomes are not evidence of impact.
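One way to keep the two categories from blurring in a reporting pipeline is to tag every metric at definition time and refuse to produce an impact report that contains no outcome measures. The metric names and the `split_report` helper below are hypothetical and illustrate the idea only.

```python
from enum import Enum


class MeasureKind(Enum):
    PROCESS = "process"
    OUTCOME = "outcome"


# Hypothetical metric registry; every reportable metric must be classified.
MEASURES = {
    "tapestry_completion_rate": MeasureKind.PROCESS,
    "keepsakes_distributed": MeasureKind.PROCESS,
    "event_attendance": MeasureKind.PROCESS,
    "pgis_score_mean": MeasureKind.OUTCOME,
    "icg_r_score_mean": MeasureKind.OUTCOME,
    "return_to_work_days_median": MeasureKind.OUTCOME,
}


def split_report(values: dict[str, float]) -> dict[MeasureKind, dict[str, float]]:
    """Group metric values by kind; reject reports with no outcome measures."""
    report: dict[MeasureKind, dict[str, float]] = {kind: {} for kind in MeasureKind}
    for name, value in values.items():
        # An unregistered metric name raises KeyError, forcing classification.
        report[MEASURES[name]][name] = value
    if not report[MeasureKind.OUTCOME]:
        raise ValueError("no outcome measures: process numbers alone are not evidence of impact")
    return report
```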

Collaborate with institutional research infrastructure, applying memorial impact measurement patterns already validated in memory care settings. Academic medical centers have biostatisticians, IRBs, and research infrastructure that can formalize memorial program measurement into peer-reviewed research. Community hospitals can partner with affiliated academic centers. Research infrastructure provides both measurement rigor and publication pathways that build program credibility with leadership and funders.

Publish results internally and externally. Internal publication means quarterly reports to hospital leadership with defined metrics, trend lines, and action recommendations. External publication means conference presentations and peer-reviewed journal submissions. Programs that publish survive leadership transitions and budget cycles. Programs that rely on rhetorical impact descriptions do not.

Build capacity for subgroup analysis. Aggregate PGIS scores are useful but can mask important patterns. A program's 12-month PGIS mean may be unchanged year over year while the program has simultaneously improved outcomes for 28-week-and-later losses and worsened outcomes for first-trimester losses. Subgroup analysis — by gestational age, by loss type (stillbirth versus NICU death versus miscarriage), by socioeconomic status, by primary language, by parity — reveals where the program is working and where it is underserving specific populations. This kind of analysis requires sample sizes that many single-site programs cannot reach alone; it is another argument for cross-site data aggregation inside a network.
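Subgroup analysis amounts to computing the same summary separately for each category and comparing the results year over year. This sketch assumes a hypothetical record shape (one dict per family with a subgroup field and a 12-month PGIS score) and an illustrative `min_n` threshold below which a subgroup is suppressed rather than reported, reflecting the sample-size limits noted above.

```python
from collections import defaultdict
from statistics import mean
from typing import Optional


def subgroup_means(
    records: list[dict],
    key: str,
    score_field: str = "pgis_12m",
    min_n: int = 10,
) -> dict[str, Optional[float]]:
    """Mean score per subgroup, suppressing subgroups smaller than min_n.

    min_n is an illustrative threshold, not a published standard.
    """
    groups: dict[str, list[float]] = defaultdict(list)
    for record in records:
        groups[record[key]].append(record[score_field])
    # None marks a subgroup too small to report on its own.
    return {
        group: (mean(scores) if len(scores) >= min_n else None)
        for group, scores in groups.items()
    }
```

Running this once per year and per grouping key (for example gestational age band, loss type, or primary language) and comparing the resulting dictionaries is what exposes offsetting changes that a single aggregate mean would hide.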

Respect the emotional cost of measurement on families. Administering the PGIS at baseline, 6 months, and 12 months is useful research; it is also three reminders in a year that a program considers this family's grief a research subject. Coordinators should frame measurement as service delivery — "these questions help us check in on how you are doing and let us know if anything has changed" — rather than as data collection. The administration itself should occur in the platform with a bereavement coordinator available for debrief if any response raises concern. Families who decline measurement should be honored without pressure, and the program's reporting should note the decline rate transparently rather than hiding it behind the completed measures.
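Reporting the decline rate transparently means stating every measurement result against the full population of invited families, not just those who completed the instrument. A minimal sketch, assuming a hypothetical `measurement_participation` helper that splits invited families into completed, declined, and no response:

```python
def measurement_participation(invited: int, completed: int, declined: int) -> dict[str, float]:
    """Completion, decline, and no-response rates as shares of families invited."""
    if invited <= 0:
        raise ValueError("invited must be positive")
    if min(completed, declined) < 0 or completed + declined > invited:
        raise ValueError("counts must be non-negative and not exceed invited")
    no_response = invited - completed - declined
    return {
        "completion_rate": completed / invited,
        "decline_rate": declined / invited,
        "no_response_rate": no_response / invited,
    }
```

For 40 invited families with 26 completions and 10 declines, this reports 65% completion, 25% decline, and 10% no response, so leadership sees that the outcome scores describe roughly two thirds of the families served.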

Plan for the families who do not want measurement at all. Some bereaved parents do not want their grief quantified by any instrument. Their refusal is not program failure; it is appropriate autonomy. Programs that honor the refusal and continue to provide memorial infrastructure at full quality — with no reduction in service, no implicit disapproval, no exclusion from community pathways — earn the trust that eventually allows some of those parents to engage with measurement six or twelve months later. Programs that tie service to measurement participation lose those families entirely and skew their outcome data toward the subset willing to be measured, which is rarely the subset with the deepest grief.

Measure the Emotional Impact of Your Memorial Program With Rigor

Hospital bereavement programs that want to move from anecdotal evidence to defensible outcome measurement can adopt StoryTapestry's integrated measurement framework. The platform administers validated grief instruments, captures engagement analytics, and integrates clinical event tracking into a unified reporting pipeline. Our research partnerships team works with hospital programs to establish measurement baselines, design comparison cohorts, and structure reports for leadership and external publication.

The measurement infrastructure consultation includes a gap assessment of your current reporting capacity, a recommended measurement stack tailored to your program's clinical context, and a 12-month implementation roadmap that phases in instrument administration, engagement analytics, and clinical event tracking without overwhelming your existing staff. The consultation also identifies whether your institution is positioned to pursue research publication, and if so, which biostatisticians, IRB members, and academic partnerships can accelerate that pathway.

If your current impact measurement rests on satisfaction scores and thank-you cards, request a measurement infrastructure consultation to see what rigorous outcome evidence looks like in practice. Bring your bereavement coordinator, a quality improvement specialist, and — if possible — a member of your institution's research infrastructure to the consultation. The combination of clinical, quality, and research perspectives in the same conversation produces an implementation plan that can actually deliver defensible outcome evidence rather than a measurement project that stalls because no one role held all the pieces.