Measuring Comrade Engagement Rates in Collaborative Veteran Memorials

The Measurement Gap in Collaborative Veteran Memorials

A regional funeral group runs 240 veteran memorials annually across six locations. Each memorial invites anywhere from 15 to 80 comrade contributors through unit email lists, VFW posts, and Facebook groups. When the operations director asks which location's memorial program produces the most comrade engagement—and which struggles—nobody can answer. The coordinators have anecdotes. The reporting system tracks visit counts and obituary page views but nothing about the collaborative layer that defines veteran memorials.

This measurement gap has real consequences. Peer-reviewed research on Facebook memorial pages and social capital shows that memorial engagement varies dramatically with platform, contributor relationship, and memorial design, yet funeral industry dashboards treat memorial pages as static obituary replicas. A PMC review of online bereavement interventions documents that participation patterns correlate with therapeutic outcomes for grievers, which means engagement is not a vanity metric. It is a signal with clinical relevance.

The arXiv "Tides of Memory Digital Echoes" study applied topic modeling, sentiment analysis, and engagement metrics to digital memorials and found systematic patterns that only emerge with structured measurement. Taylor & Francis typology work on CemTech platforms similarly documents that memorial platform engagement varies by design choice in measurable ways. Without metrics, program coordinators cannot tell whether their memorial approach activates comrade networks or leaves them cold.

For Veteran Memorial Programs specifically, comrade engagement matters more than generic visitor metrics. A memorial with 5,000 page views but zero comrade contributions has failed to capture the military community voice that defines authentic veteran memorials. A memorial with 800 page views and 24 comrade contributions has succeeded, even if the raw traffic number looks smaller.

The Comrade Engagement Metric Framework

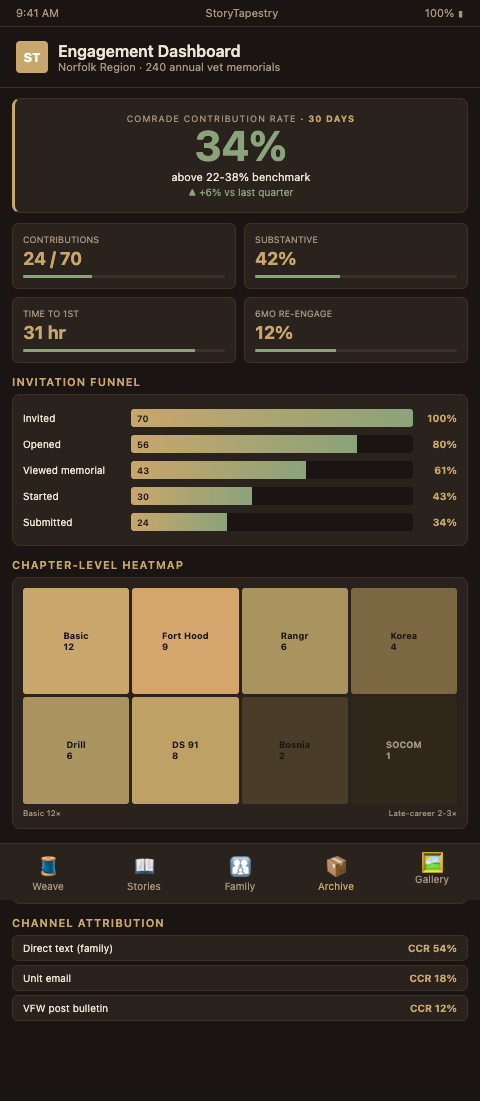

StoryTapestry's engagement measurement framework tracks the weaving of the tapestry itself, not just the traffic past it. The core metric is Comrade Contribution Rate (CCR): the number of invited comrades who contribute at least once within 30 days of memorial launch, divided by the number of comrades invited, expressed as a percentage. A CCR of 30% on a memorial inviting 60 comrades means 18 comrades each contributed a thread to the tapestry.
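The CCR arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not StoryTapestry's implementation: the function name, the `(contributor_id, date)` tuple layout, and the sample data are all assumptions, and it counts each invited comrade at most once so the result matches the 18-of-60 example above.

```python
from datetime import date, timedelta


def comrade_contribution_rate(invited_ids, contributions, launch, window_days=30):
    """Percentage of invited comrades with at least one contribution in the window.

    contributions: iterable of (contributor_id, contribution_date) tuples
    (a hypothetical layout chosen for this sketch).
    """
    invited = set(invited_ids)
    if not invited:
        return 0.0
    cutoff = launch + timedelta(days=window_days)
    contributors = {
        cid
        for cid, when in contributions
        if cid in invited and launch <= when <= cutoff
    }
    return 100.0 * len(contributors) / len(invited)


# Made-up sample: 60 invited comrades, 18 of whom contribute inside 30 days.
invited = [f"c{i}" for i in range(60)]
launch = date(2024, 3, 1)
contributions = [(f"c{i}", launch + timedelta(days=i)) for i in range(18)]
contributions.append(("c0", launch + timedelta(days=5)))    # repeat contributor, counted once
contributions.append(("c59", launch + timedelta(days=45)))  # outside the 30-day window

ccr = comrade_contribution_rate(invited, contributions, launch)
print(f"CCR: {ccr:.0f}%")  # CCR: 30%
```

Counting distinct contributors rather than raw contributions keeps one prolific comrade from inflating the rate.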

TAPS research on peer-based connection in military grief found that military survivors engage with peer contributions at measurably higher rates than civilian grievers engage with non-peer content. This peer-specificity shows up in memorial metrics when you instrument them correctly. Comrade contribution should be tracked separately from family contribution, because the two cohorts behave differently and serve different memorial functions.

Contribution depth metrics. Beyond raw contribution count, measure the substance. Categorize contributions as micro (single sentence or emoji reaction), short (single paragraph), or substantive (multi-paragraph story, photo with caption, or audio clip). The depth mix is diagnostic. A memorial with 80% micro contributions has activated participation but failed to extract narrative. A memorial with 40% substantive contributions has produced tapestry-quality threads.
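One way to compute the depth mix is sketched below. The classification rules follow the three categories described above, but the `Contribution` fields, the regex-based sentence and paragraph heuristics, and the sample contributions are assumptions made for illustration. A production classifier would likely need more careful text handling.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Contribution:
    # Hypothetical record shape for this sketch.
    text: str = ""
    has_captioned_photo: bool = False
    has_audio: bool = False


def classify_depth(c):
    """Return 'micro', 'short', or 'substantive' for one contribution."""
    if c.has_captioned_photo or c.has_audio:
        return "substantive"
    body = c.text.strip()
    # Paragraphs are blocks separated by a blank line.
    paragraphs = [p for p in re.split(r"\n\s*\n", body) if p.strip()]
    if len(paragraphs) > 1:
        return "substantive"
    # Rough sentence count: split on terminal punctuation followed by whitespace.
    sentences = [s for s in re.split(r"[.!?]+\s+", body) if s]
    return "micro" if len(sentences) <= 1 else "short"


def depth_mix(contributions):
    """Percentage of contributions in each depth category."""
    counts = Counter(classify_depth(c) for c in contributions)
    total = sum(counts.values())
    return {
        level: (100.0 * counts[level] / total if total else 0.0)
        for level in ("micro", "short", "substantive")
    }


sample = [
    Contribution(text="🫡"),
    Contribution(text="Miss you, Sarge."),
    Contribution(text="We met at Fort Bragg. He taught me everything."),
    Contribution(text="First paragraph of a story.\n\nSecond paragraph."),
    Contribution(text="Us in 1991", has_captioned_photo=True),
]
mix = depth_mix(sample)
print(mix)  # {'micro': 40.0, 'short': 20.0, 'substantive': 40.0}
```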

Chapter-level engagement. Instrument each chapter of the memorial tapestry separately. If the Basic Training chapter receives 12 contributions and the post-retirement chapter receives zero, the coordinator knows which contributor cohort was under-activated. PMC mixed-methods research on online discussion engagement validates chapter-level instrumentation as a best practice for structured online contribution platforms.
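Chapter-level instrumentation can be as simple as counting contributions per chapter tag and listing the chapters that fall below a floor. The sketch below assumes each contribution carries a single chapter label. The function names, chapter list, and counts are illustrative only.

```python
from collections import Counter


def chapter_engagement(chapters, contribution_chapters):
    """Contribution count per chapter, including chapters with zero."""
    counts = Counter(contribution_chapters)
    return {chapter: counts.get(chapter, 0) for chapter in chapters}


def under_activated(chapters, contribution_chapters, minimum=1):
    """Chapters whose contribution count is below `minimum`."""
    engagement = chapter_engagement(chapters, contribution_chapters)
    return [chapter for chapter in chapters if engagement[chapter] < minimum]


# Made-up sample mirroring the example above: 12 Basic Training threads, none post-retirement.
chapters = ["Basic Training", "First duty station", "Deployment", "Post-retirement"]
tags = ["Basic Training"] * 12 + ["First duty station"] * 5 + ["Deployment"] * 3

engagement = chapter_engagement(chapters, tags)
quiet = under_activated(chapters, tags)
print(quiet)  # ['Post-retirement']
```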

Cross-contributor interaction. Does contributor A respond to contributor B's thread? Do they confirm shared memories, add context, or spark conversation threads? Cross-contributor interaction is a leading indicator of memorial vitality. Memorials where contributors engage each other produce richer tapestries than memorials where contributors each add isolated threads. The Taylor & Francis participation pattern research frames this as the collaborative-vs-parallel distinction and finds collaborative patterns correlate with longer-lived memorial engagement.

Invitation-to-contribution funnel. The funnel stages—invitation sent, invitation opened, memorial page viewed, contribution started, contribution submitted—let coordinators diagnose where comrades drop off. A funnel that shows 80% invitation open rate but 15% contribution rate reveals a content problem: comrades see the invitation but the memorial experience fails to convert viewing into contributing. This mirrors the memorial impact measurement pattern used in bereavement program analytics.
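The funnel diagnosis can be sketched as stage-to-stage conversion rates, with the weakest step flagged for review. The stage names follow the five stages listed above. The identifiers, helper functions, and counts are assumptions for illustration.

```python
STAGES = [
    "invitation_sent",
    "invitation_opened",
    "page_viewed",
    "contribution_started",
    "contribution_submitted",
]


def funnel_report(counts):
    """Return (stage, step_rate, overall_rate) for every stage after the first.

    step_rate is the conversion from the previous stage;
    overall_rate is the conversion from invitations sent.
    """
    sent = counts[STAGES[0]]
    report = []
    for prev, cur in zip(STAGES, STAGES[1:]):
        step = counts[cur] / counts[prev] if counts[prev] else 0.0
        overall = counts[cur] / sent if sent else 0.0
        report.append((cur, step, overall))
    return report


def biggest_drop(counts):
    """Stage with the lowest conversion from the stage before it."""
    return min(funnel_report(counts), key=lambda row: row[1])[0]


# Made-up counts: 80% open rate, 15% overall contribution rate.
counts = {
    "invitation_sent": 60,
    "invitation_opened": 48,
    "page_viewed": 40,
    "contribution_started": 12,
    "contribution_submitted": 9,
}
report = funnel_report(counts)
for stage, step, overall in report:
    print(f"{stage:24s} step {step:5.0%}  overall {overall:5.0%}")
print("Largest drop-off at:", biggest_drop(counts))  # contribution_started
```

In this sample the weak step sits between viewing the page and starting a contribution, which matches the scenario where comrades see the memorial but do not begin writing.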

Longitudinal engagement. Measurement does not stop at 30 days. Six-month and one-year anniversary engagement reveals whether the memorial sustains its function as a community touchpoint. Veteran memorials specifically benefit from anniversary instrumentation because unit reunion patterns and deployment anniversary dates trigger predictable engagement waves.

Benchmark ranges from aggregated StoryTapestry data. For funeral homes running StoryTapestry-instrumented memorials, typical ranges across veteran memorials are a CCR of 22-38% within 30 days, a substantive contribution mix of 25-45%, a chapter engagement distribution skewed toward early-career chapters (Basic Training, first duty station) by 2-3x over late-career chapters, and six-month anniversary re-engagement of 8-15%. Memorials outside these ranges warrant coordinator review for multi-branch program scaling issues, contributor pool miscoding, or invitation workflow breakdowns. The comrade account case study documents one exemplar at 32% CCR.
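A range check against these benchmarks might look like the sketch below. The numeric ranges are the ones quoted above. The metric key names, the function, and the sample memorial are hypothetical.

```python
# Benchmark ranges quoted in the text; the key names are illustrative.
BENCHMARKS = {
    "ccr_pct": (22.0, 38.0),
    "substantive_pct": (25.0, 45.0),
    "early_to_late_chapter_ratio": (2.0, 3.0),
    "six_month_reengagement_pct": (8.0, 15.0),
}


def flag_out_of_range(metrics, benchmarks=BENCHMARKS):
    """Map each out-of-range metric to 'below' or 'above'.

    Metrics missing from `metrics` are skipped rather than flagged.
    """
    flags = {}
    for name, (low, high) in benchmarks.items():
        if name not in metrics:
            continue
        value = metrics[name]
        if value < low:
            flags[name] = "below"
        elif value > high:
            flags[name] = "above"
    return flags


# Made-up memorial: healthy CCR, thin depth, unusually strong anniversary return.
memorial = {
    "ccr_pct": 32.0,
    "substantive_pct": 18.0,
    "six_month_reengagement_pct": 16.5,
}
flags = flag_out_of_range(memorial)
print(flags)  # {'substantive_pct': 'below', 'six_month_reengagement_pct': 'above'}
```

A flag in either direction is only a prompt for coordinator review, since an unusually high value can signal miscoded contributor pools as easily as a strong program.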

Advanced Engagement Analytics Tactics

Cohort segmentation separates signal from noise. A 25% CCR on a memorial inviting only family is a different diagnosis than 25% CCR on a memorial inviting 80% comrades. Segment invitations by relationship type (unit peer, family, friend) and report CCR per segment. The comrade segment is the KPI that matters for Veteran Memorial Programs; family segment CCR is a secondary metric.

Invitation channel attribution tells coordinators which outreach methods actually produce contributions. Tag each invitation with its source (unit email list, VFW post bulletin, Facebook group, direct text from family member) and measure CCR per channel. Most programs discover that direct-text-from-family produces 3-5x higher CCR than broadcast email—data that reshapes outreach strategy for the next memorial.
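Segment-level and channel-level CCR can share one grouping helper, sketched here. It assumes each invitation record carries a relationship tag, a channel tag, and a flag for whether the invitee contributed. The field names and sample figures are invented for illustration and are not benchmark data.

```python
from collections import defaultdict


def ccr_by(invitations, key):
    """CCR (percent) per group, grouping invitation records by `key`."""
    invited = defaultdict(int)
    contributed = defaultdict(int)
    for inv in invitations:
        invited[inv[key]] += 1
        if inv["contributed"]:
            contributed[inv[key]] += 1
    return {group: 100.0 * contributed[group] / invited[group] for group in invited}


def make(relationship, channel, n_invited, n_contributed):
    """Build made-up invitation records for the example."""
    return [
        {"relationship": relationship, "channel": channel, "contributed": i < n_contributed}
        for i in range(n_invited)
    ]


invitations = (
    make("unit_peer", "unit_email_list", 10, 2)
    + make("unit_peer", "family_direct_text", 5, 3)
    + make("family", "family_direct_text", 5, 4)
)

by_segment = ccr_by(invitations, "relationship")
by_channel = ccr_by(invitations, "channel")
print(by_segment)
print(by_channel)  # {'unit_email_list': 20.0, 'family_direct_text': 70.0}
```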

Time-to-first-contribution measures how quickly the memorial activates its contributor network. Memorials with the first substantive contribution within 48 hours of invitation tend to produce richer final tapestries than memorials where the first substantive contribution arrives on day 12. This velocity metric predicts final tapestry quality and lets coordinators intervene (personal outreach to key potential contributors) when velocity lags.
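The velocity check can be sketched as follows, assuming each contribution has already been labeled with a depth category and a timestamp. The 48-hour threshold comes from the text. The function names and sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta


def time_to_first_substantive(invited_at, contributions):
    """Elapsed time until the first substantive contribution, or None if there is none.

    contributions: iterable of (timestamp, depth) tuples.
    """
    times = [
        when
        for when, depth in contributions
        if depth == "substantive" and when >= invited_at
    ]
    return min(times) - invited_at if times else None


def velocity_lagging(invited_at, contributions, now, threshold=timedelta(hours=48)):
    """True when no substantive contribution arrived within the threshold."""
    elapsed = time_to_first_substantive(invited_at, contributions)
    if elapsed is not None:
        return elapsed > threshold
    # Nothing substantive yet: lagging only once the threshold has passed.
    return now - invited_at > threshold


invited_at = datetime(2024, 3, 1, 9, 0)
contributions = [
    (datetime(2024, 3, 1, 15, 0), "micro"),
    (datetime(2024, 3, 2, 20, 0), "substantive"),
]
elapsed = time_to_first_substantive(invited_at, contributions)
print(elapsed)  # 1 day, 11:00:00
print(velocity_lagging(invited_at, contributions, now=datetime(2024, 3, 5, 9, 0)))  # False
```

Passing `now` explicitly lets a coordinator dashboard evaluate the same memorial at different points in time without relying on the system clock.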

Contributor retention across memorials reveals the network effect. When a comrade contributes to one veteran's memorial at your funeral home and later contributes to a second veteran's memorial, that retention is a leading indicator of community network formation around your program. Cross-memorial contributor retention of 12-18% is a healthy Veteran Memorial Programs benchmark.
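Cross-memorial retention can be computed as the share of contributors who appear on more than one memorial. The text does not spell out the exact denominator, so the sketch below assumes it is all distinct contributors across the program. The function name and sample data are illustrative.

```python
from collections import defaultdict


def cross_memorial_retention(contributions):
    """Percentage of distinct contributors who contributed to two or more memorials.

    contributions: iterable of (contributor_id, memorial_id) tuples.
    """
    memorials_by_contributor = defaultdict(set)
    for contributor_id, memorial_id in contributions:
        memorials_by_contributor[contributor_id].add(memorial_id)
    if not memorials_by_contributor:
        return 0.0
    returning = sum(1 for m in memorials_by_contributor.values() if len(m) > 1)
    return 100.0 * returning / len(memorials_by_contributor)


# Made-up sample: eight contributors, one of whom contributed to two memorials.
records = [(f"c{i}", "memorial_A") for i in range(5)]
records += [(f"c{i}", "memorial_B") for i in range(4, 8)]
records.append(("c0", "memorial_A"))  # a repeat on the same memorial does not count as retention

retention = cross_memorial_retention(records)
print(f"{retention:.1f}%")  # 12.5%
```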

Sentiment trajectory per chapter surfaces emotional arcs. Using sentiment analysis, you can see whether early-career chapters skew positive (basic training humor, first-unit camaraderie) while mid-career chapters carry heavier emotional weight (combat deployment, loss of unit members). Sentiment instrumentation produces narrative maps that help coordinators curate and sequence memorial chapters for family review.

Finally, the framework supports explicit reporting to families. A post-memorial engagement summary delivered two weeks after the service gives the family a tangible accounting of the community response: 34 comrades contributed, spanning 1974-2002 service years, with 67% substantive depth, and 4 contributors connected after decades out of touch. Families tell funeral home leadership this report is the most meaningful bereavement follow-up they receive.

Instrument Your Veteran Memorial Program

Veteran Memorial Programs operating without structured engagement metrics are running blind on the dimension that defines their service quality. StoryTapestry's built-in engagement dashboard tracks Comrade Contribution Rate, chapter-level breakdowns, funnel stages, and longitudinal re-engagement out of the box. With that data in place, the regional funeral group from the opening example, based in Norfolk, can finally answer which location runs the best veteran memorial program.

To connect memorial data to your operations reporting, request the engagement analytics walkthrough with a StoryTapestry product specialist. The walkthrough runs 45 minutes and covers the engagement dashboard, the Comrade Contribution Rate calculation methodology, chapter-level and branch-level breakdowns, the five-stage contributor funnel from invitation through submitted contribution, post-memorial re-engagement, and a live demo on anonymized sample data from a comparable regional funeral group.

Pilot engagements include dashboard onboarding for your operations reporting lead, a supervised configuration pass on your first three active veteran memorial cases, and a 60-day instrument calibration review against your baseline service-quality reporting. Most programs begin running the dashboard on active memorials within 10 business days of the walkthrough. Bring your operations director, one memorial coordinator, and your data or reporting analyst — the walkthrough produces a dashboard configuration plan the three of them can deploy before the next monthly operations review.