How to Audit a Full Field Trip Day for Learning-Goal Leaks

What Leaves With the Last Bus

At 3:15 PM, the last school group buses out. Your floor team debriefs for 12 minutes. Someone mentions that "the Water Cycle exhibit got skipped a lot again." Someone else notes that the 1:00 PM group seemed distracted and didn't engage well with the Circuits station. The docent who was near the Erosion table all morning says third-graders didn't figure out the mechanism. These observations are accurate. They are also incomplete, unquantified, and already competing with each other for the limited attention of a staff that spent six hours managing wave pressure.

By tomorrow morning, the useful signal in that debrief will have been discarded with the exit surveys.

A full field trip day audit changes what leaves with the last bus. Rather than informal debrief impressions, the audit produces a structured data record: per-station bypass rates by group, per-session wave-pressure logs, chaperone script execution notes, and learning-goal coverage mapping for each of the six groups. That record is the evidence base for exhibit redesign, grant reporting, and scheduling adjustments — none of which can be built from a debrief summary.

Research on the novel field trip phenomenon (Academia.edu) establishes that adjusting to an unfamiliar setting actively interferes with task learning, which means that even a well-designed exhibit will underperform with first-time visitors because of environmental novelty. A learning-goal leak audit distinguishes that novelty effect from structural exhibit failure by tracking which bypass patterns persist across return-visitor groups.

The Educational Value of Field Trips (Education Next), a randomized controlled trial of 10,912 K-12 students, found that younger students gain the most from field trips. An audit framework exposes the leak points where that potential gain is lost. Third-graders have the most to gain and the shortest attention windows, so audit data that reveals which stations they bypassed is the highest-priority design input for a children's museum.

The word "leaks" is precise. A learning-goal leak is not a failure. It is a gap between a designed learning opportunity and the conditions under which that opportunity is delivered. A well-designed Water Cycle exhibit can have a significant learning-goal leak if the 30-kid wave arriving at 10:15 AM bypasses it entirely, not because the exhibit is poorly designed, but because the wave pressure configuration has routed the group around it. This is the core insight behind why learning goals fail without pacing discipline: in that case the leak is in the pacing conditions, not the exhibit content. The audit identifies whether the leak is in the exhibit design (the station is bypassed regardless of wave pressure configuration) or in the floor configuration (the station would be engaged with if the group were routed through it correctly). Those are different problems with different solutions.

The Field Trip Day Audit Framework

Think of the exhibit floor as a pressure pipe network, and a full field trip day as six sequential high-pressure fluid bursts moving through it. The audit documents where each burst flowed, where it didn't, and at what rates. A learning-goal leak is a pipe junction — a station with a mapped learning objective — where the burst consistently routed around rather than through. The audit makes those junctions visible across all six bursts, not just the one the docent happened to observe.

The audit framework has five components, mapped to the data that PressurePath collects automatically and the data that requires structured floor observation.

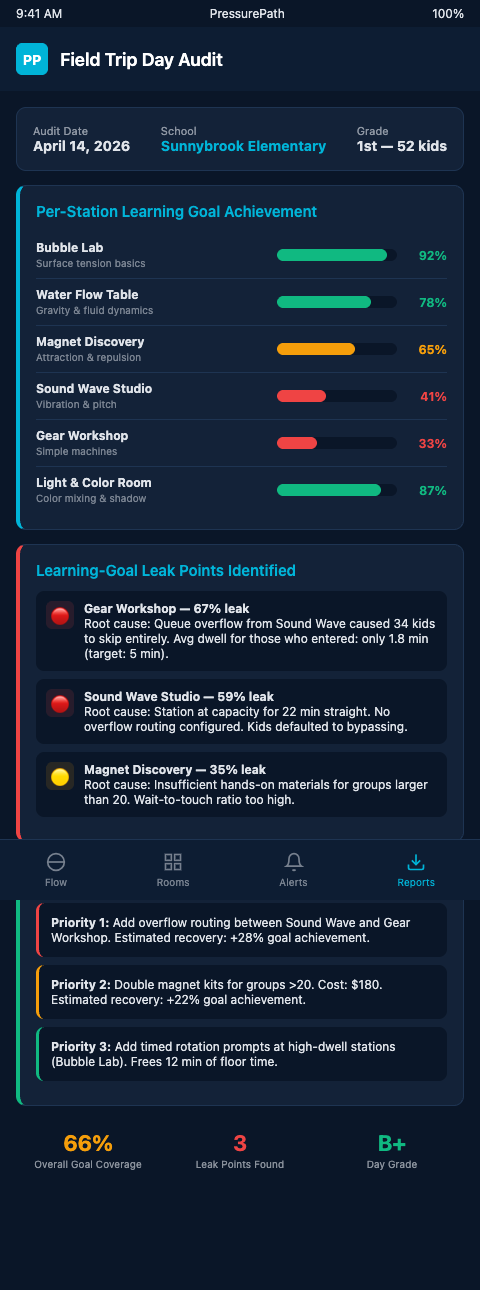

Component 1: Per-session pressure logs. For each of the six groups, PressurePath records the wave-pressure heat map — which zones the group concentrated in, which zones it bypassed, and how the pressure distribution shifted over the session duration. This component is automated; no additional floor data collection required.

Component 2: Station bypass rates by group. Per-station stop rates and average dwell times for each group, compared to grade-level historical baselines. Dexibit dashboards surface this data in the per-session view; the audit aggregates it across all six sessions to identify consistent versus situational bypass. This component is automated if station-level sensors are deployed.

Component 3: Chaperone script execution log. Did chaperones follow their routing assignments? A structured one-minute check-in with each chaperone at the end of the visit captures which routing cues they used, which they skipped, and whether conditional branches were triggered. This component requires a brief structured check-in protocol — not a survey, but a four-question card that a floor team member collects before the group exits. Pre-visit preparation and learning outcome research (Virginia Tech) confirms that highest outcomes require structured pre/post anchors; the chaperone script log is the post-visit anchor that closes the loop.

Component 4: Exhibit mechanism observation notes. For stations flagged by the bypass data, a 90-second observation note captures whether the mechanism was functioning correctly, whether signage was visible, and whether any environmental factor (lighting angle, adjacent activity noise) was affecting approach. This component requires one designated observer per flagged station, making it resource-intensive — best deployed for the three to four highest-priority bypass candidates, not all 14 stations simultaneously.

Component 5: Learning-goal coverage mapping. The synthesizing layer. Each exhibit station on your floor has a mapped learning objective. The goal-referenced exhibit evaluation methodology (ResearchGate) maps visitor behaviors back to intended objectives. For each group, the audit calculates what percentage of the group engaged with the station sufficiently to have plausibly encountered its learning objective (stop rate ≥15%, dwell ≥45 seconds). That percentage, aggregated across all six groups, is the learning-goal coverage rate for the day.

A Water Cycle puzzle with a stated NSF objective showing 11% learning-goal coverage across six third-grade groups is a grant accountability red flag. Not an impression — a number, with a source.
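The coverage calculation in Component 5 can be sketched in a few lines of Python. This is a minimal illustration, not PressurePath's implementation: the `GroupStationRecord` shape and function names are hypothetical, and because the text does not spell out how the two thresholds combine, the sketch assumes that a visitor counts as covered when their dwell reaches 45 seconds, that the 15% stop rate is checked separately per group, and that the day's rate pools visitors across groups.

```python
from dataclasses import dataclass

DWELL_THRESHOLD_S = 45       # dwell threshold stated in the text
STOP_RATE_THRESHOLD = 0.15   # stop-rate threshold stated in the text


@dataclass
class GroupStationRecord:
    """One group's tracking data for one station (hypothetical record shape)."""
    group_id: str
    group_size: int
    dwell_seconds: list  # one dwell time per visitor who stopped


def engaged_count(rec: GroupStationRecord) -> int:
    """Number of visitors whose dwell met the threshold."""
    return sum(1 for d in rec.dwell_seconds if d >= DWELL_THRESHOLD_S)


def group_coverage(rec: GroupStationRecord) -> float:
    """Share of the group that stopped and dwelled long enough."""
    if rec.group_size == 0:
        return 0.0
    return engaged_count(rec) / rec.group_size


def meets_stop_rate(rec: GroupStationRecord) -> bool:
    """Whether enough of the group stopped at all (assumed separate check)."""
    if rec.group_size == 0:
        return False
    return len(rec.dwell_seconds) / rec.group_size >= STOP_RATE_THRESHOLD


def day_coverage(records: list) -> float:
    """Coverage for the day, pooled across groups (weighted by group size)."""
    total = sum(r.group_size for r in records)
    if total == 0:
        return 0.0
    return sum(engaged_count(r) for r in records) / total
```

Pooling by visitor rather than averaging per-group percentages keeps a small group from counting as much as a large one; if your reporting requires a per-group average instead, that is a one-line change.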

Advanced Audit Configurations

The first advanced layer is longitudinal leak tracking. A single day's audit produces one data point per station. Twelve weeks of audits produce 12 data points and a pattern. Aggregated tracking benchmark data from Visitor Studies provides the Sweep Rate Index (SRI) and Diligent Visitor (DV) benchmarks against which longitudinal data is compared: a station that has been below SRI threshold for six consecutive weeks is a structural redesign candidate with quantified evidence, not a subjective impression.

PressurePath accumulates audit data across field trip days and presents longitudinal bypass trends by station. The trend view reveals which stations are degrading over the season (accumulating bypass as exhibit novelty wears off for repeat-visitor districts) and which are improving (reflecting the impact of mid-visit refreshes or chaperone script updates).
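The six-consecutive-weeks rule described above could be checked with a sketch like the following. It assumes one benchmark score per station per week, ordered oldest to newest; the function names, the `history` dictionary shape, and the threshold value passed in are all hypothetical and would need to match your own benchmark definition.

```python
def trailing_weeks_below(weekly_scores, threshold):
    """Count the most recent consecutive weeks strictly below the threshold."""
    run = 0
    for score in reversed(weekly_scores):
        if score < threshold:
            run += 1
        else:
            break  # the streak ends at the first week at or above threshold
    return run


def redesign_candidates(history, threshold, min_weeks=6):
    """Stations whose current below-threshold streak is at least min_weeks.

    history maps a station name to its weekly scores, oldest first.
    """
    return sorted(
        station
        for station, scores in history.items()
        if trailing_weeks_below(scores, threshold) >= min_weeks
    )
```

Counting only the trailing streak means a station that recovered last week drops off the list, which matches the "has been below threshold for six consecutive weeks" wording; a station with an older six-week slump would need a different query.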

The second advanced layer is pre/post comparison. The framework for exhibit design and evaluation links design inputs to learning outcomes — the structure that makes before/after comparisons valid. When a station receives a physical modification (new lever mechanism, repositioned signage, lighting adjustment), the audit records the bypass rate before and after across matched group profiles. That comparison is the evidence base for exhibit iteration.
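A before/after comparison across matched group profiles might look like the sketch below. The session dictionary keys (`profile`, `period`, `group_size`, `bypassed`) are illustrative assumptions rather than a real export format, and a profile here is any hashable label such as a (grade, time slot) pair.

```python
from collections import defaultdict


def matched_bypass_change(sessions):
    """Change in bypass rate (after minus before) per matched profile.

    Each session is a dict with hypothetical keys:
      profile    - hashable label, e.g. ("grade3", "am")
      period     - "before" or "after" the station modification
      group_size - visitors in the group
      bypassed   - visitors who did not stop at the station
    Profiles observed in only one period are skipped as unmatched.
    """
    # totals[profile][period] = [bypassed_sum, group_size_sum]
    totals = defaultdict(lambda: {"before": [0, 0], "after": [0, 0]})
    for s in sessions:
        bucket = totals[s["profile"]][s["period"]]
        bucket[0] += s["bypassed"]
        bucket[1] += s["group_size"]

    changes = {}
    for profile, periods in totals.items():
        before_bypassed, before_n = periods["before"]
        after_bypassed, after_n = periods["after"]
        if before_n == 0 or after_n == 0:
            continue  # no matched comparison available for this profile
        changes[profile] = after_bypassed / after_n - before_bypassed / before_n
    return changes
```

A negative value means the bypass rate fell after the modification. The sketch reports raw differences only; with few sessions per profile, a difference of a few points may be noise rather than an effect of the change.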

The pacing conditions required to deliver learning goals (sufficient dwell time, appropriate group sizing, absence of wave collision) are exactly what the audit framework surfaces when they are missing, along with where on the floor they failed.

A 200-field-trip-day longitudinal record, as examined in the station bypass analysis of 200 field trip days, produces the statistical power to distinguish floor-level patterns from group-level noise. The single-day audit is the data collection unit; the longitudinal analysis is what makes the data actionable for exhibit design decisions.

The dead-room audit methodology used in performance review frameworks across 30 immersive theater productions is directly parallel: rooms or zones that receive consistently low audience engagement across many performances are structural problems revealed only by longitudinal tracking. The children's museum equivalent is an exhibit station bypassed consistently across many field trip days, and the audit makes that pattern visible.

Turning Audit Data Into Design Priorities

The most important output of a full field trip day audit is the redesign priority list. After eight to ten audit sessions, the data produces a clear ranking: which stations have structural bypass (consistent learning-goal leaks regardless of group profile or time slot), which have conditional bypass (leaks that appear with specific grade levels or arrival windows), and which are performing at or above benchmark.

Structural bypass candidates are the redesign priorities. Conditional bypass candidates are the scheduling and script-calibration priorities. Exhibit stations at or above benchmark are the model cases — the design team examines what they're doing right and whether those attributes can be extended to lower-performing stations.
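The three-way ranking can be expressed as a small classification step. The sketch below is one plausible reading, not the product's ranking logic: it assumes you already have a coverage rate per station per group profile, treats "below benchmark for every profile" as structural and "below for some profiles" as conditional, and uses a hypothetical benchmark value supplied by the caller.

```python
def classify_station(coverage_by_profile, benchmark):
    """Label a station as 'structural', 'conditional', or 'benchmark'.

    coverage_by_profile maps a group profile label to a coverage rate (0 to 1).
    """
    if not coverage_by_profile:
        raise ValueError("no coverage data for station")
    below = [p for p, c in coverage_by_profile.items() if c < benchmark]
    if len(below) == len(coverage_by_profile):
        return "structural"   # leaks regardless of profile or time slot
    if below:
        return "conditional"  # leaks only for specific profiles
    return "benchmark"        # at or above benchmark everywhere


def priority_list(stations, benchmark):
    """Rank stations: structural first, then conditional, then benchmark.

    Within a category, lower mean coverage ranks higher.
    """
    order = {"structural": 0, "conditional": 1, "benchmark": 2}
    rows = []
    for name, coverage in stations.items():
        label = classify_station(coverage, benchmark)
        mean_coverage = sum(coverage.values()) / len(coverage)
        rows.append((name, label, mean_coverage))
    return sorted(rows, key=lambda row: (order[row[1]], row[2]))
```

The strict all-or-some rule is deliberately simple. With eight to ten audit sessions behind each number, a team might prefer a tolerance, such as calling a station structural when it is below benchmark for most profiles, and that choice should be made explicitly rather than inherited from this sketch.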

That prioritization framework turns a full field trip day's worth of observations, alerts, and tracking data into a ranked action list. The structural redesign candidates get the exhibit team's attention; the conditional bypass candidates get scheduling adjustments and updated chaperone scripts; the benchmark stations get documented as design exemplars. PressurePath automates the data collection and ranking layers so the design team's time is spent on the analysis and decision-making rather than the aggregation.

Children's museum exhibit designers who run quarterly design reviews without this audit data are making redesign decisions based on staff impressions and aggregate attendance, a basis that consistently misidentifies the Water Cycle puzzle as a mid-tier performer rather than a critical learning-goal leak. The audit changes that.

Audit the Day You Can Still Change

A field trip day audit completed the day of the visit produces actionable findings while the floor team's observations are still fresh, the chaperone scripts are still in hand, and the exhibit mechanisms are still configured. The same audit completed six weeks later from staff memory produces impressions.

The decay curve on audit data quality is steep. Observations made within an hour of the group's exit are detailed and specific; observations recorded from memory two days later are reduced to general impressions; observations attempted six weeks later are often wrong in ways that feel right to the staff member recalling them. That decay isn't a staff failure — it's a structural feature of human memory under operational pressure. The remedy is to build the audit synthesis into the workflow of the day itself, not the review meeting six weeks hence.

The practical implementation cost is modest. A structured end-of-day audit workflow typically takes 30 to 45 minutes of staff time when the collection infrastructure is in place. That's a small investment relative to the alternative — reconstructing field trip day patterns from incomplete notes and competing memories in a planning meeting weeks later. Museums that formalize the same-day audit workflow report that it becomes a natural rhythm within two or three weeks of adoption, not an additional burden imposed on an already-stretched floor team.

PressurePath automates the pressure log and bypass data components of the audit so the synthesis work — learning-goal coverage mapping, chaperone script evaluation, mechanism observation — is the only component requiring staff time on the day. If you run field trip programming and want a structured audit framework that produces exhibit-design-grade findings from each field trip day, join the PressurePath waitlist for children's museum exhibit designers.