Pacing Simulators vs Docent Instinct: Which Catches Bypass First

What Docent Instinct Actually Measures

The senior docent at your museum has accumulated four years of Thursday and Friday field trip observations. She knows which group configurations tend to cluster at the entrance corridor. She knows which stations lose attention after the first seven minutes. She knows the 10:15 AM slot reliably generates a chaotic first ten minutes and a calm final twenty. That knowledge is genuine and operationally useful.

What it cannot do is produce a bypass rate. A bypass rate requires a denominator: of the 32 kids who entered this session, how many stopped at the Water Cycle puzzle for at least 15 seconds? A docent observing from the atrium perimeter does not have line-of-sight to every station simultaneously. She registers the stations where kids are visibly clustered — the Electricity wall, the wind tunnel, the hands-on geology table. The stations where kids are absent are simply not drawing attention, and absence is not as visually salient as presence. The bypass is happening in the periphery.
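The denominator requirement can be made concrete with a minimal sketch. It assumes per-visitor dwell records are available from badge or sensor data. The `Visit` record and the `bypass_rate` function are hypothetical names chosen for illustration, not part of any PressurePath API.

```python
from dataclasses import dataclass

# 15-second stop threshold taken from the example in the text.
MIN_STOP_SECONDS = 15.0


@dataclass(frozen=True)
class Visit:
    """One visitor's dwell at one station (hypothetical record shape)."""
    visitor_id: str
    station: str
    dwell_seconds: float


def bypass_rate(visits, station, entrants, min_stop=MIN_STOP_SECONDS):
    """Fraction of entrants who never stopped at `station` for `min_stop` seconds."""
    if entrants <= 0:
        raise ValueError("entrants must be positive: the rate needs a denominator")
    # Count each visitor once, even if they returned to the station.
    stoppers = {
        v.visitor_id
        for v in visits
        if v.station == station and v.dwell_seconds >= min_stop
    }
    return 1.0 - len(stoppers) / entrants


# Illustrative data: 4 kids stop long enough, 2 only pause briefly.
visits = [Visit(f"kid{i}", "Water Cycle", 20.0) for i in range(4)]
visits += [Visit(f"kid{i}", "Water Cycle", 5.0) for i in range(4, 6)]
print(f"{bypass_rate(visits, 'Water Cycle', entrants=32):.1%}")  # 87.5%
```

The point of the sketch is the `entrants` argument: without a count of who entered, the 4 qualifying stops cannot be turned into a rate.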

Observational research on V&A digital exhibits, using systematic video observation, found that bypass patterns docents do not flag are consistently present in the data. The cause is not inattention: the visual field of a docent positioned for engagement facilitation is not the visual field needed to catch bypass. Both fields of view are valid, and they serve different purposes.

Nielsen Norman Group research on observation in user studies reinforces the same principle in a parallel field: structured systematic observation catches behaviors that in-the-moment facilitation cannot, because the observer's position and attention are optimized for different purposes. The bypass is real and consistent; the docent's perimeter view simply doesn't register it.

This isn't a critique of docent attention or skill — it's an observation about geometric constraints. A docent positioned to facilitate engagement at a high-traffic station is, by that positioning, unable to simultaneously observe low-traffic stations. The two jobs require different locations. A pacing simulator doesn't require a location at all; it's reading sensor and badge data from all 14 stations simultaneously, every 30 seconds, regardless of where the floor team is standing. The comparison isn't about which is better in absolute terms — it's about what each tool can see from where it stands.

What Each Tool Actually Catches

The pressurized-water model makes the comparison concrete. A 30-kid school wave enters the museum as a high-pressure fluid burst. Docent instinct observes where the burst is flowing — the high-pressure zones where kids are visibly present and active. A pacing simulator tracks where the burst is not flowing: the stations receiving near-zero pressure, where bypass rates indicate the exhibit is acting like a closed valve that the fluid routes around.

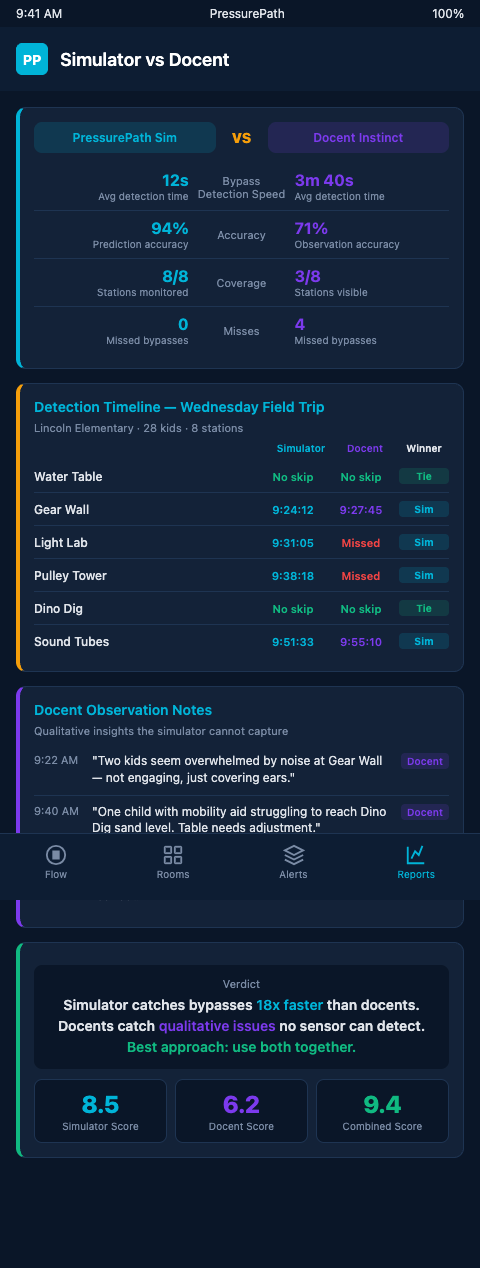

Both readings are correct. They're measuring different things. Docent instinct is high-resolution at the presence side: the experienced docent knows that the Electricity wall has 19 kids and the chaperones are standing to the left, which tells her the group configuration and the dwell pattern at that specific station. PressurePath is high-resolution at the absence side: the simulator knows that the Water Cycle puzzle has logged 4 stops from 32 kids in the last 22 minutes, which is a 12.5% stop rate against a third-grade historical baseline of 38%.
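The arithmetic behind the Water Cycle figures is simple enough to sketch. The function name `stop_rate_gap` is a hypothetical helper for illustration; the inputs (4 stops, 32 kids, a 38% baseline) come from the paragraph above.

```python
def stop_rate_gap(stops, entrants, baseline):
    """Return (stop_rate, shortfall), where shortfall is baseline minus stop_rate."""
    if entrants <= 0:
        raise ValueError("entrants must be positive")
    rate = stops / entrants
    return rate, baseline - rate


rate, shortfall = stop_rate_gap(stops=4, entrants=32, baseline=0.38)
print(f"stop rate {rate:.1%}, {shortfall * 100:.1f} points below baseline")
# stop rate 12.5%, 25.5 points below baseline
```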

SenSource per-exhibit sensor data catches bypass patterns that perimeter docents miss, and it does so at a continuous cadence, which is the critical distinction. Docent observation is intermittent: a docent at the entrance registers the group on arrival and periodically checks on clusters. Sensor data is continuous: every 30 seconds, every station in the network reports its occupancy state. That continuity is what produces the bypass rate rather than the bypass impression.

Research on exhibit visitor metrics (ScienceDirect) supports the same quantitative framework — stop rate index (SRI) and diligent visitor (DV) rates — that docent observation cannot produce. An SRI benchmark of 300 means the average visitor spends three times the passing rate at a station. A DV rate of 25% means a quarter of visitors engage diligently rather than superficially. When a station falls below those benchmarks, the deficit is quantified, not sensed.

The question of which catches bypass first has a clean answer for structural bypass — the kind that persists across multiple groups, multiple days, multiple docents. Structural bypass at the Water Cycle puzzle shows up in tracking data after two or three group sessions. It might take a docent eight weeks to form a tentative impression that "kids seem to skip that section," and that impression may not survive a single atypical group that clusters there unexpectedly.
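One way to express "persists across multiple groups" as a rule is to require several consecutive sessions below baseline. This is a minimal sketch under that assumption; the function name and the three-session default are hypothetical, chosen to match the "two or three group sessions" mentioned above rather than any documented PressurePath setting.

```python
def is_structural_bypass(session_stop_rates, baseline, min_sessions=3):
    """True if the most recent `min_sessions` sessions all fell below baseline."""
    if len(session_stop_rates) < min_sessions:
        return False  # not enough sessions yet to call it structural
    recent = session_stop_rates[-min_sessions:]
    return all(rate < baseline for rate in recent)


# Three sessions in a row well under a 38% baseline.
print(is_structural_bypass([0.125, 0.16, 0.09], baseline=0.38))  # True
# One atypical group that clustered at the station breaks the streak.
print(is_structural_bypass([0.125, 0.45, 0.09], baseline=0.38))  # False
```

Unlike an impression, the rule does not forget earlier sessions when a single atypical group appears. The streak resets, but the per-session rates remain on record.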

For in-session bypass — a single group bypassing a station during an ongoing field trip — the comparison is closer. An experienced docent who is actively monitoring the floor can notice in real time that "no one is at the Water Cycle puzzle." PressurePath flags the same condition through a stop-rate alert threshold, surfacing the bypass to the floor team's dashboard rather than requiring a docent to have the right line-of-sight at the right moment.
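A stop-rate alert threshold of this kind could be sketched as a rolling window over stop events. Everything here is an assumption made for illustration: the `StopRateAlert` class, the 30-minute window, and the threshold value are hypothetical, and the source does not describe how PressurePath implements its alerts.

```python
from collections import deque


class StopRateAlert:
    """Rolling-window stop-rate check for one station (hypothetical design)."""

    def __init__(self, group_size, threshold, window_seconds=30 * 60):
        if group_size <= 0:
            raise ValueError("group_size must be positive")
        self.group_size = group_size
        self.threshold = threshold
        self.window_seconds = window_seconds
        self._stops = deque()  # (timestamp_seconds, visitor_id), oldest first

    def record_stop(self, timestamp, visitor_id):
        # Assumes stop events arrive in timestamp order.
        self._stops.append((timestamp, visitor_id))

    def check(self, now):
        """Return (alert, stop_rate) for the window ending at `now`."""
        cutoff = now - self.window_seconds
        while self._stops and self._stops[0][0] < cutoff:
            self._stops.popleft()  # drop stops that aged out of the window
        unique_stoppers = {visitor_id for _, visitor_id in self._stops}
        rate = len(unique_stoppers) / self.group_size
        return rate < self.threshold, rate


# Threshold of 0.19 is an arbitrary example: half of a 38% baseline.
alert = StopRateAlert(group_size=32, threshold=0.19)
for i, t in enumerate([60, 120, 180, 240]):
    alert.record_stop(t, f"kid{i}")
print(alert.check(now=22 * 60))  # (True, 0.125)
```

A deployed version would likely need a warm-up period, since every station reads as bypassed in the first minutes of a session before anyone has had time to stop.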

For engagement tracking tools, the practical division of labor is clear: docent instinct handles real-time facilitation at the presence zones where the docent is effective; the simulator handles continuous bypass monitoring at the absence zones where docent coverage is structurally limited.

Advanced Configurations: Combining Both

The most effective children's museum floor operations use docent instinct and pacing simulators as complementary rather than competing tools. The simulator surfaces the bypass pattern; the docent interprets it and acts on it. A dashboard alert that flags "Water Cycle puzzle: 14% stop rate over last 30 minutes" triggers a docent to reposition and investigate — but the docent's judgment about whether the low stop rate reflects exhibit placement, group profile, or a mechanical issue with the lever mechanism is not something the simulator produces.

Informalscience.org research on attracting and holding power establishes the distinction between low attracting power (visitors approach but don't stop) and low holding power (visitors stop briefly and leave). A pacing simulator tracking stop rate and average dwell time distinguishes between those two failure modes; docent instinct rarely does. Low attracting power suggests a placement or signage problem. Low holding power suggests a content or mechanism problem. The response is different in each case.
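The two failure modes can be separated with a simple rule on stop rate and average dwell time. This is a sketch, not a validated classifier: `classify_station` is a hypothetical name, the 38% stop-rate floor reuses the third-grade baseline quoted earlier, and the 60-second dwell floor is a placeholder with no source behind it.

```python
def classify_station(stop_rate, avg_dwell_seconds,
                     min_stop_rate=0.38, min_dwell_seconds=60.0):
    """Label a station by which benchmark it misses first.

    stop_rate: fraction of visitors who stopped at the station.
    avg_dwell_seconds: mean dwell among visitors who did stop.
    """
    if stop_rate < min_stop_rate:
        # Few visitors stop at all: points to placement or signage.
        return "low attracting power"
    if avg_dwell_seconds < min_dwell_seconds:
        # Visitors stop but leave quickly: points to content or mechanism.
        return "low holding power"
    return "within benchmarks"


print(classify_station(stop_rate=0.125, avg_dwell_seconds=90.0))  # low attracting power
print(classify_station(stop_rate=0.50, avg_dwell_seconds=20.0))   # low holding power
```

Stop rate is checked first because average dwell computed from only a handful of stoppers is a weak signal on its own.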

The comparison of immersive theater simulators versus usher instincts finds the same division: ushers read room energy and make real-time facilitation calls; simulators track scene density patterns across 30 performances and surface structural audience routing issues. Children's museum exhibit designers who've worked with that framing recognize the parallel immediately.

Integrating the simulator with educator dashboards that surface bypass patterns closes the loop: the simulator feeds the dashboard with continuous station data, the dashboard surfaces the actionable signals to the floor team, and the docents act on those signals with the real-time judgment that no simulator replaces.

When Docent Instinct Outperforms the Simulator

There are specific situations where an experienced docent's read of the floor is faster and more useful than any data the pacing simulator produces in real time. The first is mechanical failure detection: a docent who notices that a third-grader pulled the Water Cycle lever and it didn't move (a stuck mechanism) immediately flags a maintenance need. The simulator registers only low dwell time at that station, which looks identical to disinterest. The docent can disambiguate that signal; the simulator cannot.

The second is group-dynamic reading. A docent who observes that the 10:15 AM group's two sub-groups are in conflict — kids from one sub-group are following kids from the other, creating an unplanned consolidation — can intervene in real time with a social-dynamic response that no simulator models. Chaperone script adjustments, sub-group separation, or a redirecting activity all require the kind of situational reading that proximity enables.

The third is accessibility accommodation. A docent working with a group that includes kids with mobility differences or sensory sensitivities notices and responds to those needs in ways that sensor-based tracking cannot detect. The docent's presence is the accommodation; the simulator's data is irrelevant to that function.

PressurePath is designed to augment docent capacity, not replace it. The simulator handles the monitoring functions that require simultaneous multi-station coverage and quantitative baseline comparison. The docent handles the interpretation and response functions that require presence, judgment, and human interaction. Children's museum exhibit designers who understand that division get more from both.

The Right Question for Each Tool

The combination that produces the best outcomes is neither "docents only" nor "simulator only" — it's a defined division of labor where each tool handles the functions it's structurally suited for. The docent manages facilitation, accessibility accommodation, mechanical anomaly detection, and real-time social dynamics. PressurePath manages continuous multi-station bypass monitoring, quantitative baseline comparison, structural bypass pattern detection, and alert routing.

Where that division breaks down in practice is when organizations lack confidence in the simulator's outputs and default back to docent instinct for all decisions — including bypass detection — because the instinct feels more concrete. The Visitor Studies benchmark research provides the anchor that makes the simulator's outputs concrete: when a station's stop rate index falls below the SRI benchmark of 300, that's a specific, defined deficit, not an impression. It means the average visitor is spending less than three times the passing rate at that station. That number is as concrete as a temperature reading, and it's available from data that docent instinct cannot produce.

Docent instinct answers: "What's happening right now with this group, at the stations where kids are present?" PressurePath answers: "Which stations are being bypassed, at what rate, by which group profiles, and how does that compare to your historical baseline?"

Those are different questions. For children's museum exhibit designers evaluating their grant-funded stations, the second question is the one that determines whether a $180K NSF exhibit is earning its floor space. If you want quantified bypass data — not impressions, not survey scores, not anecdotal feedback — join the PressurePath waitlist and see what your current field trip data reveals at the station level.